Preamble

Figure: Occam (Wikipedia)

A misconception persists that an overfitted model can be identified by the size of the generalisation gap between the model's training and test sets over its learning curves. Even some prominent online lectures and blog posts now repeat this misconception uncritically. Unfortunately, the practice has diffused into some academic papers and into industry, where practitioners attribute poor generalisation to overfitting. We resolve this with a new conceptual identification of complexity plots, so-called Occam's curves, which are distinct from learning curves. The accessible mathematical definitions given here clarify the confusion.

Learning Curve Setting: Generalisation Gap

Learning curves, which originate from Ebbinghaus's work on human memory, describe how a given algorithm's generalisation improves over time or with experience. We use the term inductive bias to express a model, as a model can manifest itself in different forms, from differential equations to deep learning.

Definition: Given an inductive bias $\mathscr{M}$ formed by $n$ datasets with monotonically increasing sizes $\mathbb{T} = \{|\mathbb{T}_{0}| < |\mathbb{T}_{1}| < ... < |\mathbb{T}_{n}| \}$, a learning curve $\mathscr{L}$ for $\mathscr{M}$ is expressed by the performance measure of the model over the datasets, $\mathbb{p} = \{ p_{0}, p_{1}, ..., p_{n} \}$; hence $\mathscr{L}$ is a curve on the plane of $(\mathbb{T}, p)$.

By this definition, we deduce that $\mathscr{M}$ learns if $\mathscr{L}$ increases monotonically.
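One way to write this criterion explicitly is sketched below. It assumes that a larger value of the performance measure $p$ means better performance, which the definition above does not state; for an error-type measure the inequalities would flip.

$$ p_{0} \leq p_{1} \leq ... \leq p_{n}, \quad p_{n} > p_{0} $$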

A generalisation gap is defined as follows.

Definition: The generalisation gap for an inductive bias $\mathscr{M}$ is the difference between its learning curve on unseen datasets, $\mathscr{L}$, and its learning curve on the datasets used for fitting, the so-called training curve $\mathscr{L}^{train}$. The difference can be a simple difference, or another measure that quantifies the gap.

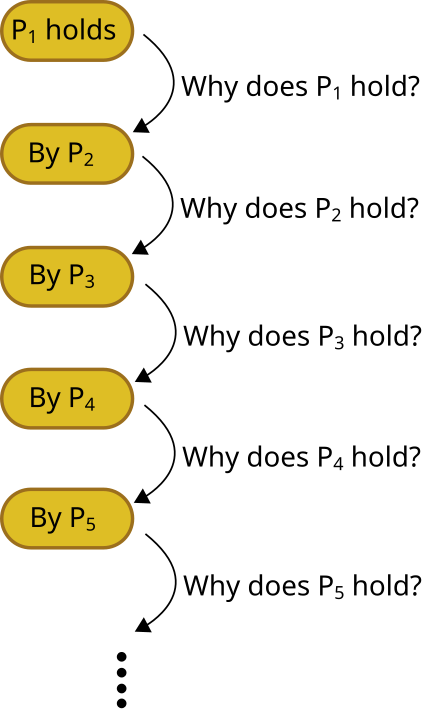
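As a minimal sketch of the two definitions above, the snippet below computes a training curve, a test curve, and their simple difference for a single model over nested datasets of increasing size. The noisy sine data, the polynomial model, the mean squared error as performance measure (lower is better), and the function name `learning_curves` are all illustrative assumptions, not part of the original post.

```python
import numpy as np

def learning_curves(degree, sizes, n_test=200, noise=0.2, seed=0):
    """Return (train_mse, test_mse) of one polynomial model over growing datasets."""
    rng = np.random.default_rng(seed)

    def sample(n):
        # Hypothetical data-generating process: a noisy sine on [0, 1].
        x = rng.uniform(0.0, 1.0, n)
        y = np.sin(2 * np.pi * x) + rng.normal(0.0, noise, n)
        return x, y

    x_test, y_test = sample(n_test)    # unseen data, fixed across sizes
    x_all, y_all = sample(max(sizes))  # pool from which nested datasets are cut
    train_mse, test_mse = [], []
    for n in sizes:
        x, y = x_all[:n], y_all[:n]    # |T_0| < |T_1| < ... by construction
        coeffs = np.polyfit(x, y, degree)
        train_mse.append(float(np.mean((np.polyval(coeffs, x) - y) ** 2)))
        test_mse.append(float(np.mean((np.polyval(coeffs, x_test) - y_test) ** 2)))
    return np.array(train_mse), np.array(test_mse)

sizes = [20, 40, 80, 160, 320]
train_mse, test_mse = learning_curves(degree=3, sizes=sizes)
gap = test_mse - train_mse  # simple-difference generalisation gap per dataset size
for n, g in zip(sizes, gap):
    print(f"|T| = {n:4d}  gap = {g:.4f}")
```

Note that only one inductive bias (a fixed polynomial degree) appears here, so the resulting gap describes how that single model generalises and involves no comparison across complexities.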

We conjecture the following.

Conjecture: The generalisation gap cannot identify whether $\mathscr{M}$ is an overfitted model. Overfitting is about Occam's razor and requires a pairwise comparison between two inductive biases of different complexities.

As the conjecture suggests, the generalisation gap is not about overfitting, despite the common misconception. Why, then, the misconception? It arises from confusion about how to produce the curve by which we could judge overfitting.

Occam Curves: Overfitting Gap [Occam's Gap]

In generating Occam curves, a complexity measure $\mathscr{C}$ over different inductive biases $\mathscr{M_{i}}$ plays a role. The definition then reads as follows.

Definition: Given $m$ inductive biases $\mathscr{M_{i}}$ formed by $n$ datasets with monotonically increasing sizes $\mathbb{T} = \{|\mathbb{T}_{0}| < |\mathbb{T}_{1}| < ... < |\mathbb{T}_{n}| \}$, an Occam curve $\mathscr{O}$ for a given $\mathscr{M}$ is expressed by the performance measure of the model over complexity-dataset size points $\mathbb{F} = [(|\mathbb{T}_{0}|, \mathscr{C}), (|\mathbb{T}_{1}| , \mathscr{C}), ..., (|\mathbb{T}_{n}| , \mathscr{C}) ] $. The performance of a given inductive bias reads $\mathbb{p} = \{ p_{0}, p_{1}, ..., p_{n} \}$; hence the Occam curve $\mathscr{O}$ is a curve on the plane of $(\mathbb{F}, p)$.

Given this definition, producing Occam curves is more complicated than simply plotting test and train curves over batches. The ordering in $\mathbb{F}$ forms the so-called goodness of rank.
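The following is a rough sketch of how performance over complexity-dataset size points could be tabulated for several inductive biases. It assumes polynomial degree as the complexity measure $\mathscr{C}$, test mean squared error as the performance measure, and a difference against a hypothetical reference model as the pairwise comparison; none of these choices, nor the name `occam_curves`, come from the original post.

```python
import numpy as np

def occam_curves(degrees, sizes, n_test=500, noise=0.2, seed=0):
    """Test MSE at each (dataset size, complexity) point.

    Rows follow `sizes`, columns follow `degrees` (the assumed complexity measure).
    """
    rng = np.random.default_rng(seed)

    def sample(n):
        # Hypothetical data-generating process: a noisy sine on [0, 1].
        x = rng.uniform(0.0, 1.0, n)
        y = np.sin(2 * np.pi * x) + rng.normal(0.0, noise, n)
        return x, y

    x_test, y_test = sample(n_test)
    x_all, y_all = sample(max(sizes))
    perf = np.empty((len(sizes), len(degrees)))
    for i, n in enumerate(sizes):
        for j, d in enumerate(degrees):
            # Every inductive bias is fitted on the same nested dataset.
            coeffs = np.polyfit(x_all[:n], y_all[:n], d)
            perf[i, j] = np.mean((np.polyval(coeffs, x_test) - y_test) ** 2)
    return perf

degrees = [1, 3, 5, 7]        # inductive biases ordered by assumed complexity
sizes = [30, 60, 120, 240]
perf = occam_curves(degrees, sizes)

reference = degrees.index(3)  # hypothetical choice of reference model
occam_gap = perf - perf[:, [reference]]  # pairwise deviation from the reference
print(np.round(occam_gap, 4))
```

Under these assumptions, each row of `perf` is read across complexities at a fixed dataset size, and a judgement about overfitting or under-fitting comes from comparing a model's column with the reference column rather than from a single model's train and test curves.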

Summary and take home

The resolution of the overfitting misconception lies in producing Occam curves to judge the bias-variance tradeoff, rather than the learning curves of a single model.

Further reading & notes

- Further posts and a glossary: the concepts of overgeneralisation and goodness of rank.

- The double descent phenomenon uses Occam's curves, not learning curves.

- We use dataset size as an interpretation of increasing experience. There could be other ways of expressing gained experience, but we take the most obvious evidence.

Please cite as follows:

@misc{suezen23rmo,

title = {Resolution of misconception of overfitting: Differentiating learning curves from Occam curves},

author = {Mehmet Süzen},

year = {2023}

}

Postscript notes

Take home messages

Understanding Generalisation Gap and Occam’s gap

Model selection and evaluation are often confused by novice as well as experienced data scientists and modelling professionals. There are many misconceptions in the literature, but in practice the primary take-home messages can be summarised as follows:

1. What is a model? A model is an “inductive bias” of the modeller: a selected set of parametrised functions, for example a neural network architecture choice. Contrary to what many assume, a specific parametrisation of a model (deep learning architecture) is not a different model.

2. The difference between a model’s test and training performance is the generalisation gap. Overfitting and under-fitting are not about the generalisation gap.

3. Overfitting or under-fitting is a comparison problem: how does a model deviate from a reference model? This is called Occam’s gap, or the so-called model selection error.

4. Occam’s gap generalises empirical risk minimisation over a learning curve. Empirical risk minimisation itself is not about learning.

How and when a model generalises well, and the generalisation of empirical risk minimisation, are currently open research topics.
